If you are a student, the Associate-Developer-Apache-Spark study materials can lighten your heavy bag, and you can save more time for making friends, traveling, and broadening your horizons.

We are confident that our study materials provide the best Associate-Developer-Apache-Spark exam torrent to help you pass.

Just think: you only need to spend some money to get a certificate, which makes you more competitive in the job market and can improve your salary.

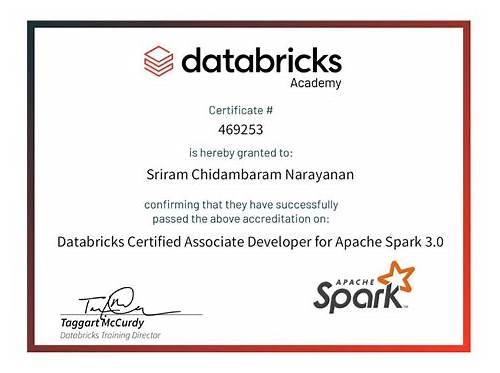

Databricks Certified Associate Developer for Apache Spark 3.0 Exam: Professional Study Materials

Time and tide wait for no man. As a DumpExam Databricks Certification candidate, you will have access to our updates for one year after the purchase date. The Associate-Developer-Apache-Spark study questions included in the different versions (PDF, Software, and APP online) are complete and cover the entire syllabus of the exam.

In short, our online customer service will answer all of our clients' questions about the Associate-Developer-Apache-Spark study materials promptly and efficiently. But there are indeed many barriers on the way forward.

So you will save a lot of time and study efficiently. For exam candidates, the Associate-Developer-Apache-Spark exam is the problem you are facing right now, and we promise a 100% pass guarantee.

Thus, the Associate-Developer-Apache-Spark actual test questions have a high hit rate and contain the newest information.

NEW QUESTION 20

Which of the following code blocks reads the Parquet file /FileStore/imports.parquet into a DataFrame?

- A. spark.read.parquet("/FileStore/imports.parquet")

- B. spark.read().format('parquet').open("/FileStore/imports.parquet")

- C. spark.mode("parquet").read("/FileStore/imports.parquet")

- D. spark.read.path("/FileStore/imports.parquet", source="parquet")

- E. spark.read().parquet("/FileStore/imports.parquet")

Answer: A

Explanation:

Static notebook | Dynamic notebook: See test 1 (https://flrs.github.io/spark_practice_tests_code/#1/23.html, https://bit.ly/sparkpracticeexams_import_instructions)

NEW QUESTION 21

Which of the following statements about RDDs is incorrect?

- A. RDD stands for Resilient Distributed Dataset.

- B. The high-level DataFrame API is built on top of the low-level RDD API.

- C. An RDD consists of a single partition.

- D. RDDs are great for precisely instructing Spark on how to do a query.

- E. RDDs are immutable.

Answer: C

Explanation:

An RDD consists of a single partition.

Quite the opposite: Spark partitions RDDs and distributes the partitions across multiple nodes.

NEW QUESTION 22

Which of the following is not a feature of Adaptive Query Execution?

- A. Coalesce partitions to accelerate data processing.

- B. Replace a sort merge join with a broadcast join, where appropriate.

- C. Collect runtime statistics during query execution.

- D. Reroute a query in case of an executor failure.

- E. Split skewed partitions into smaller partitions to avoid differences in partition processing time.

Answer: D

Explanation:

Reroute a query in case of an executor failure.

Correct. Although this feature exists in Spark, it is not a feature of Adaptive Query Execution. The cluster manager keeps track of executors and will work together with the driver to launch an executor and assign the workload of the failed executor to it (see also link below).

Replace a sort merge join with a broadcast join, where appropriate.

No, this is a feature of Adaptive Query Execution.

Coalesce partitions to accelerate data processing.

Wrong, Adaptive Query Execution does this.

Collect runtime statistics during query execution.

Incorrect, Adaptive Query Execution (AQE) collects these statistics to adjust query plans. This feedback loop is an essential part of accelerating queries via AQE.

Split skewed partitions into smaller partitions to avoid differences in partition processing time.

No, this is indeed a feature of Adaptive Query Execution. Find more information in the Databricks blog post linked below.

More info: Learning Spark, 2nd Edition, Chapter 12; On which way does RDD of spark finish fault-tolerance? - Stack Overflow; How to Speed up SQL Queries with Adaptive Query Execution (Databricks blog)

NEW QUESTION 23

Which of the following describes a difference between Spark's cluster and client execution modes?

- A. In cluster mode, a gateway machine hosts the driver, while it is co-located with the executor in client mode.

- B. In cluster mode, executor processes run on worker nodes, while they run on gateway nodes in client mode.

- C. In cluster mode, the cluster manager resides on a worker node, while it resides on an edge node in client mode.

- D. In cluster mode, the Spark driver is not co-located with the cluster manager, while it is co-located in client mode.

- E. In cluster mode, the driver resides on a worker node, while it resides on an edge node in client mode.

Answer: E

Explanation:

In cluster mode, the driver resides on a worker node, while it resides on an edge node in client mode.

Correct. The idea of Spark's client mode is that workloads can be executed from an edge node, also known as a gateway machine, outside the cluster. The most common way to execute Spark, however, is cluster mode, where the driver resides on a worker node.

In practice, client mode is constrained by the data transfer speed between the edge node and the cluster, which is typically lower than the transfer speed between worker nodes inside the cluster. Also, any job that is executed in client mode will fail if the edge node fails. For these reasons, client mode is usually not used in a production environment.
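The execution mode is chosen when the application is submitted, through spark-submit's --deploy-mode flag. The sketch below only builds the two command lines and does not launch anything; build_submit_command and app.py are hypothetical names, and YARN is assumed as the cluster manager because Spark does not support cluster deploy mode for Python applications on a standalone cluster.

```python
from typing import List


def build_submit_command(app_path: str, deploy_mode: str, master: str = "yarn") -> List[str]:
    """Hypothetical helper: return a spark-submit argument list for one deploy mode."""
    if deploy_mode not in ("client", "cluster"):
        raise ValueError(f"deploy_mode must be 'client' or 'cluster', got {deploy_mode!r}")
    return ["spark-submit", "--master", master, "--deploy-mode", deploy_mode, app_path]


# client: the driver runs inside the spark-submit process on the edge (gateway) node.
client_cmd = build_submit_command("app.py", "client")
# cluster: the cluster manager launches the driver on a worker node inside the cluster.
cluster_cmd = build_submit_command("app.py", "cluster")

print(" ".join(client_cmd))
print(" ".join(cluster_cmd))
```

Either list could be passed to subprocess.run on a machine where spark-submit is on the PATH; the executors run on worker nodes in both cases.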

In cluster mode, the cluster manager resides on a worker node, while it resides on an edge node in client execution mode.

No. In both execution modes, the cluster manager may reside on a worker node, but it does not reside on an edge node in client mode.

In cluster mode, executor processes run on worker nodes, while they run on gateway nodes in client mode.

This is incorrect. Only the driver runs on gateway nodes (also known as "edge nodes") in client mode, but not the executor processes.

In cluster mode, the Spark driver is not co-located with the cluster manager, while it is co-located in client mode.

No, in client mode, the Spark driver is not co-located with the cluster manager. The whole point of client mode is that the driver is outside the cluster and not associated with the resource that manages the cluster (the machine that runs the cluster manager).

In cluster mode, a gateway machine hosts the driver, while it is co-located with the executor in client mode.

No, it is exactly the opposite: There are no gateway machines in cluster mode, but in client mode, they host the driver.

NEW QUESTION 24

In which order should the code blocks shown below be run in order to create a table of all values in column attributes next to the respective values in column supplier in DataFrame itemsDf?

1. itemsDf.createOrReplaceView("itemsDf")

2. spark.sql("FROM itemsDf SELECT 'supplier', explode('Attributes')")

3. spark.sql("FROM itemsDf SELECT supplier, explode(attributes)")

4. itemsDf.createOrReplaceTempView("itemsDf")

- A. 1, 2

- B. 4, 3

- C. 0

- D. 1, 3

- E. 4, 2

Answer: B

Explanation:

Static notebook | Dynamic notebook: See test 1

NEW QUESTION 25

......